The Problem

In auto component and catalytic converter manufacturing, metallic cylinders carry engraved identification markings — part numbers, batch codes, direction indicators. These markings are stamped directly into the metal surface. No ink. No paint. Just metal on metal.

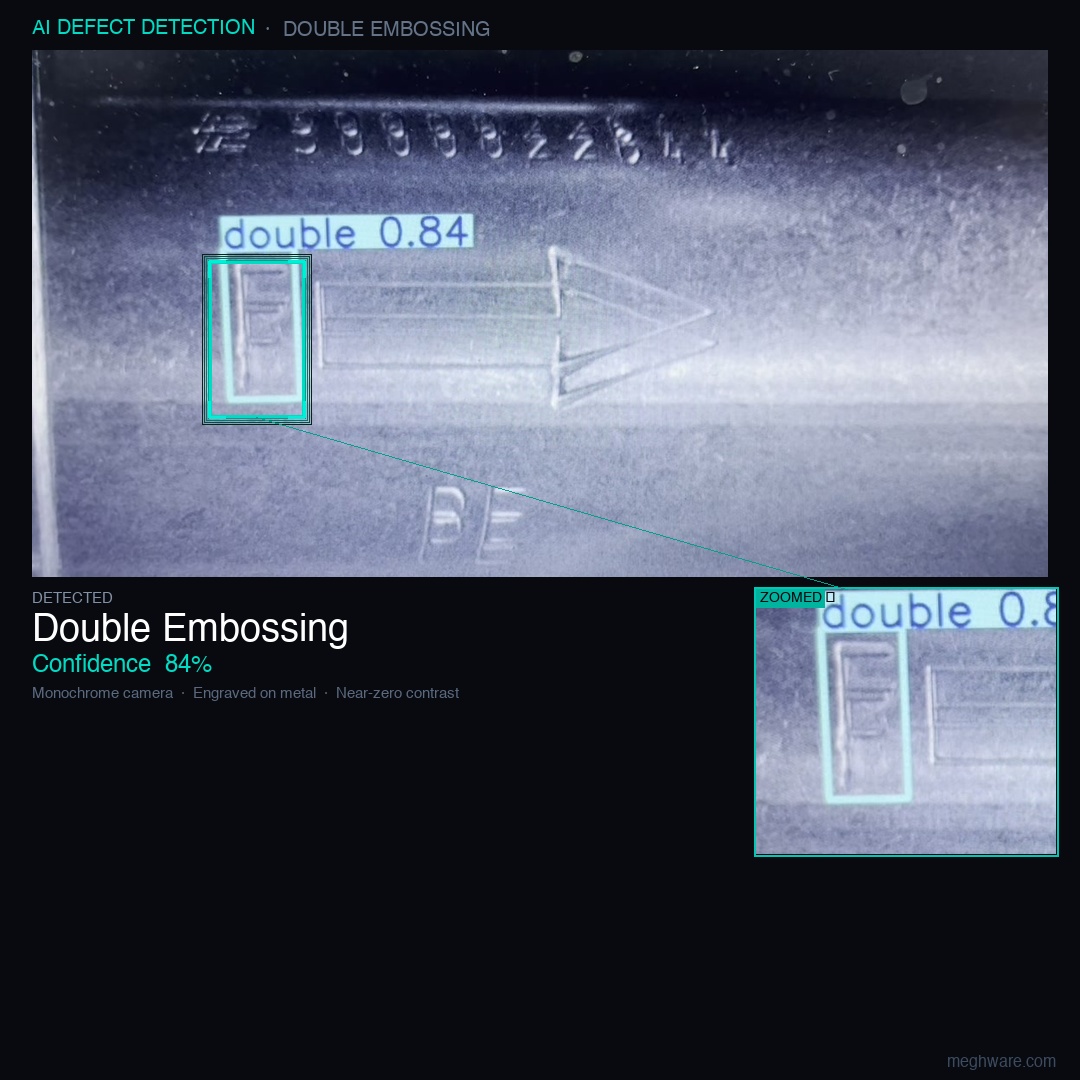

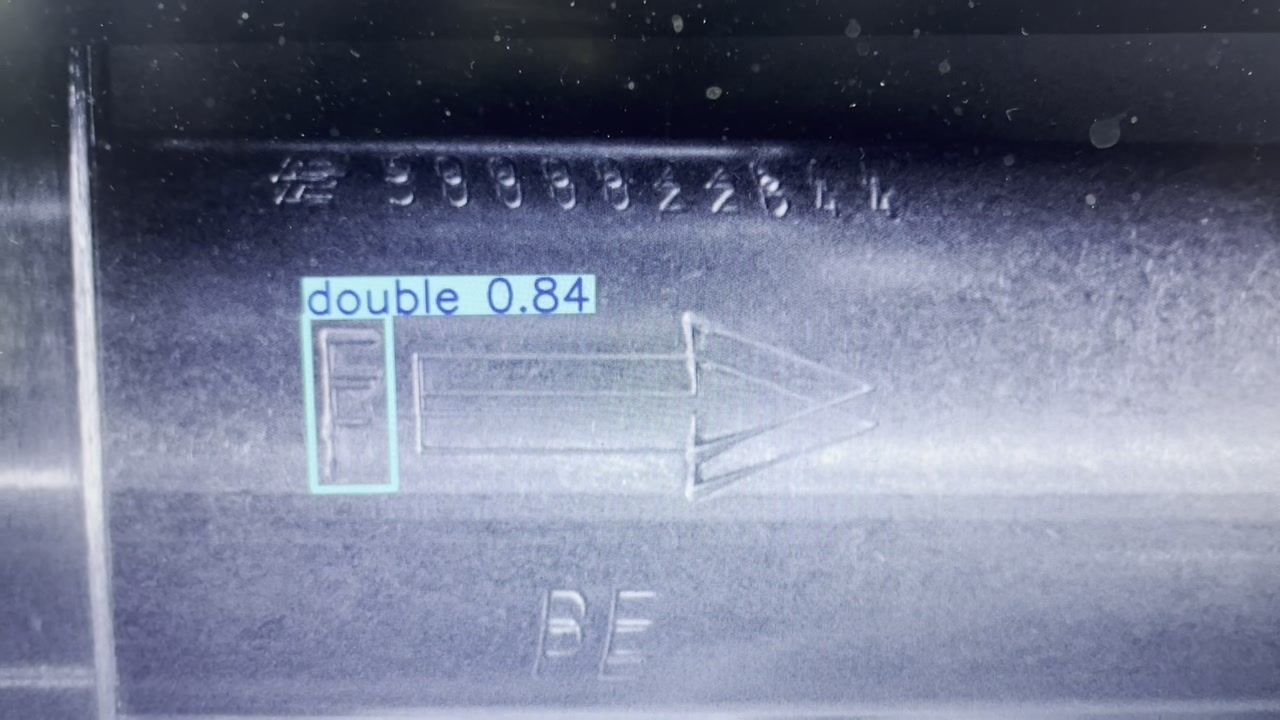

A double embossing defect occurs when the stamping machine strikes the same part twice — usually due to a jig misfire or a part that didn't eject cleanly after the first strike. The result: two overlapping impressions, slightly offset, at the same location. The part looks almost identical to a correctly stamped one. It isn't.

Shipping a double-embossed part downstream creates traceability failures — a batch number that doesn't scan cleanly, a direction marking that's ambiguous, or a serialisation error that cascades into a recall. The client wanted automated detection before parts left the line.

The ask was straightforward: detect double embossing on metallic cylinders in real time, reliably, without slowing the line. What wasn't straightforward was everything else.

The Challenge: When Physics Gets in the Way

Engraved markings on polished metal are, to a standard camera, nearly invisible. There is no colour difference — it's the same metal. There is no significant brightness difference — the engraving is shallow. What exists is a tiny variation in the way light reflects off the recessed surface versus the surrounding area. Under normal lighting, that variation is well below the noise floor of a typical industrial camera.

This is where most computer vision projects for metallic surfaces fail at the first step — not because the model is wrong, but because the image doesn't contain the information needed to detect anything. A model can only work with what the image gives it.

The specific challenges we faced:

- Reflective surface: Polished metal creates specular highlights that shift with every tiny change in part orientation. What looks like a marking in one frame is a glare spot in the next.

- Near-zero contrast: Engraved characters on a background of the same metal differ from it by only a few intensity levels (out of 256) in an 8-bit grayscale image.

- Double impression subtlety: The defect isn't the marking itself — it's a ghost impression alongside the correct one. Two nearly identical marks, overlapping slightly. Even under ideal conditions, distinguishing one from two requires fine-grained spatial resolution.

- Real-time constraint: Processing had to happen on-site, on an edge device, fast enough to flag parts before they moved to the next station.
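To put the resolution problem in numbers, here is a back-of-envelope sketch of how many pixels a ghost offset actually covers in the image. The field of view, sensor width, and 0.1 mm offset are hypothetical values chosen for illustration, not measurements from this project.

```python
def mm_per_pixel(field_of_view_mm: float, sensor_pixels: int) -> float:
    """Object-space size of one pixel along one image axis."""
    return field_of_view_mm / sensor_pixels


def pixels_spanned(offset_mm: float, field_of_view_mm: float, sensor_pixels: int) -> float:
    """How many pixels a given physical offset covers in the image."""
    return offset_mm / mm_per_pixel(field_of_view_mm, sensor_pixels)


if __name__ == "__main__":
    # Hypothetical numbers, not from the project: a 40 mm field of view
    # imaged onto 2048 pixels, and a 0.1 mm offset between the two strikes.
    fov_mm, width_px, offset_mm = 40.0, 2048, 0.1
    print(f"{mm_per_pixel(fov_mm, width_px):.4f} mm/pixel")
    print(f"{pixels_spanned(offset_mm, fov_mm, width_px):.2f} pixels of offset")
```

With these assumed numbers the ghost sits about five pixels from the true mark. Halve the sensor width or double the field of view and that drops to under three, which is why the camera and lens have to be chosen around the smallest offset you need to catch.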

The Approach

Step 1 — Getting the image right

Before writing a single line of model code, we spent significant time on the image acquisition pipeline. This is the step most people skip, and it's the reason most metallic surface CV projects fail.

The key insight: engraved markings create directional shadows. If you illuminate the surface at a shallow, raking angle — light almost parallel to the surface — the recessed areas cast tiny shadows that a monochrome camera can capture. This is a well-known technique in metrology, but the angle matters enormously. Too steep and you lose the shadows. Too shallow and you get glare.
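The geometry behind raking light can be sketched with a deliberately simple model: a groove with vertical walls and a flat floor, lit from a distant source at some elevation angle above the surface. The shadow the wall casts on the floor grows as depth / tan(angle), and it can never be longer than the groove is wide. The groove dimensions below are hypothetical, and the model ignores surface finish, sloped walls, and inter-reflection.

```python
import math


def shadow_length_mm(depth_mm: float, elevation_deg: float, groove_width_mm: float) -> float:
    """Horizontal length of the shadow a vertical groove wall casts on the groove floor.

    Simplified model (an assumption for illustration): vertical walls, a flat
    floor, a distant light at `elevation_deg` above the surface, and no
    inter-reflection. The shadow cannot be longer than the groove is wide.
    """
    if not 0.0 < elevation_deg < 90.0:
        raise ValueError("elevation must be strictly between 0 and 90 degrees")
    raw = depth_mm / math.tan(math.radians(elevation_deg))
    return min(raw, groove_width_mm)


if __name__ == "__main__":
    depth, width = 0.3, 1.0  # hypothetical groove: 0.3 mm deep, 1.0 mm wide
    for angle in (60, 45, 20, 10, 5):
        s = shadow_length_mm(depth, angle, width)
        print(f"{angle:>2} deg -> shadow {s:.3f} mm ({s / width:.0%} of groove floor)")
```

The numbers show why the angle is so sensitive: between 45 and 20 degrees the shadow nearly triples, and below about 17 degrees it already fills a 1 mm groove. The model says nothing about glare, which depends on surface finish and camera placement and still has to be found empirically.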

We ran a systematic series of lighting angle tests, capturing images across a range of configurations, and evaluated each frame's contrast histogram before committing to a setup. The final configuration used a monochrome camera with a controlled raking light source — no colour information needed, no expensive hyperspectral setup, just geometry.
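A lighting sweep like this needs a number to rank configurations by. The write-up doesn't say which contrast measure was used, so the sketch below swaps in a simple contrast-to-noise ratio (CNR): the gap between mean marking and mean background intensity, divided by the pooled pixel noise. The configuration names and pixel samples are synthetic.

```python
from statistics import mean, pstdev


def contrast_to_noise(marking_pixels, background_pixels):
    """Contrast-to-noise ratio between two grayscale regions.

    |mean(marking) - mean(background)| divided by the pooled (population)
    standard deviation of the two regions. Higher means the marking stands
    out more relative to pixel noise.
    """
    diff = abs(mean(marking_pixels) - mean(background_pixels))
    noise = ((pstdev(marking_pixels) ** 2 + pstdev(background_pixels) ** 2) / 2) ** 0.5
    if noise == 0:
        return 0.0 if diff == 0 else float("inf")
    return diff / noise


def best_configuration(samples):
    """Return the (name, score) pair with the highest CNR.

    `samples` maps a configuration name to (marking_pixels, background_pixels).
    """
    scores = {name: contrast_to_noise(m, b) for name, (m, b) in samples.items()}
    best = max(scores, key=scores.get)
    return best, scores[best]


if __name__ == "__main__":
    # Synthetic pixel samples for two hypothetical lighting angles.
    sweep = {
        "angle_45": ([120, 122, 121, 123], [121, 123, 122, 120]),
        "angle_10": ([90, 92, 91, 93], [130, 128, 131, 129]),
    }
    name, score = best_configuration(sweep)
    print(f"best: {name} (CNR {score:.1f})")
```

Here the low-angle setup wins because the marking's mean sits well away from the background while the noise stays the same; at 45 degrees the two means coincide and the score is zero. In practice the pixel samples would come from hand-drawn marking and background regions in each test frame.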

Step 2 — Preprocessing

Even with optimised lighting, the raw images had noise. We applied a targeted preprocessing pipeline:

- Contrast-limited adaptive histogram equalisation (CLAHE) to locally enhance contrast without blowing out highlights

- Gaussian blur for noise suppression before edge amplification

- Region-of-interest cropping to focus inference on the marking zone only

The goal at this stage was simple: make the double impression visible to a human looking at the processed frame. If a human can't see it, the model won't find it either.

Step 3 — Model training and detection

With a workable image pipeline, we trained a YOLO-based object detection model on labelled examples of correctly stamped and double-stamped parts. The training set included parts at various rotational positions and lighting micro-variations to build robustness.

The model was trained to detect and classify the marking region — not just "is there a marking?" but "is this a single impression or a double?" — a classification problem wrapped inside a detection one.
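Once the detector returns labelled boxes, turning them into a pass/fail decision for the part is plain logic. The sketch below assumes two hypothetical class names, `single_impression` and `double_impression`, and a hypothetical confidence threshold of 0.5; neither comes from the project.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical class names; the real label set is not described in the write-up.
SINGLE, DOUBLE = "single_impression", "double_impression"


@dataclass(frozen=True)
class Detection:
    label: str         # class predicted for the marking region
    confidence: float  # detector score in [0, 1]
    box: tuple[float, float, float, float]  # (x1, y1, x2, y2) in pixels


def part_verdict(detections: list[Detection], threshold: float = 0.5) -> str:
    """Turn per-region detections into a pass / reject / review decision."""
    confident = [d for d in detections if d.confidence >= threshold]
    if any(d.label == DOUBLE for d in confident):
        return "reject"  # at least one confident double impression
    if any(d.label == SINGLE for d in confident):
        return "pass"    # marking found, and it looks like a single strike
    return "review"      # no confident marking at all: don't guess
```

For example, a single box labelled `double_impression` at 0.84 confidence rejects the part at the default threshold but falls through to `review` if the threshold is raised to 0.9. Sending "no confident marking" to review rather than passing it is a deliberately conservative choice: a part the model can't read should not ship silently.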

The Result

The proof-of-concept ran live on the shop floor, on a standard laptop connected directly to the camera. No cloud, and so no network round-trip. Each part was scanned, processed, and classified in under 200 milliseconds.
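Holding a 200 ms budget is easier when every stage is timed separately, so the slow one is obvious. This is a minimal, library-free sketch; the stage names and the stand-in functions in the example are hypothetical.

```python
import time
from typing import Callable, Sequence, Tuple

LATENCY_BUDGET_S = 0.200  # per-part budget quoted in the write-up


def run_timed(stages: Sequence[Tuple[str, Callable]], frame):
    """Run pipeline stages in order, returning the final result and per-stage timings."""
    timings = {}
    data = frame
    for name, fn in stages:
        start = time.perf_counter()
        data = fn(data)
        timings[name] = time.perf_counter() - start
    return data, timings


def within_budget(timings: dict, budget_s: float = LATENCY_BUDGET_S) -> bool:
    """True if the summed stage times fit inside the per-part budget."""
    return sum(timings.values()) <= budget_s


if __name__ == "__main__":
    # Stand-in stages; real ones would grab, preprocess and classify a frame.
    stages = [
        ("capture", lambda f: f),
        ("preprocess", lambda f: f),
        ("classify", lambda f: "pass"),
    ]
    verdict, timings = run_timed(stages, frame=None)
    total_ms = 1000 * sum(timings.values())
    print(verdict, f"{total_ms:.3f} ms", "OK" if within_budget(timings) else "OVER BUDGET")
```

On a real line the worst case matters more than the average, so it's worth logging these timings for every part and checking the slowest ones against the budget, not just the mean.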

The 84% confidence score on the detected defect reflects the difficulty of the underlying image. This isn't a case where the model sees a clearly distinct object; it's finding a subtle spatial anomaly in near-uniform pixel data. At production scale, with a calibrated imaging setup and a larger training set, we expect this score to rise well above 90%.

The right question isn't "how accurate is the model?" — it's "how good is the image?" Get the image right and the model accuracy follows.

What We Learned

This project reinforced something we believe strongly at Meghware: in industrial computer vision, the hard problem is almost never the AI.

The model architecture, the training framework, the inference pipeline — these are solved problems with well-understood tooling. What isn't solved is knowing which lighting angle reveals a 0.3mm engraving on polished steel, or how to preprocess an image that looks like grey on grey to extract meaningful features.

The best computer vision engineers we know spend most of their time on optics, lighting, and preprocessing — not on the neural network.

For manufacturers considering automated visual inspection: start with the camera and the light. Everything else is downstream of that decision.

Working on a similar problem?

If you have a QC challenge that seems too subtle for automation — reflective surfaces, low-contrast markings, high-speed lines — that's exactly the kind of problem we want to look at.
